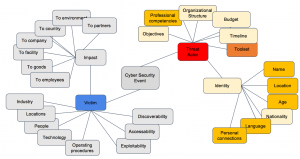

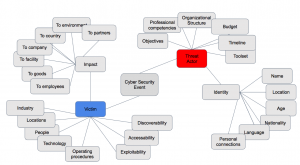

The piece of information or intelligence that you get from an intelligence provider is known as an “indication”. For good or ill, these little guys have become a buzzword over the past seven or so years. Pretty much every security practitioner today is familiar with the concept of an “IOC”, or “indication of compromise” (more often expanded as “indicator of compromise”).

Unfortunately, the industry has been distracted by IOCs and quite parochial about the broader concept of indications.

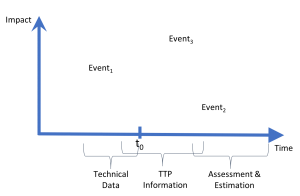

IOCs generally fall under what I call the “technical data” category: IP addresses, file hashes, email senders and subject lines, and so on. Supposedly, you can load these externally provided items into your internal sensors to see whether you were hit by the same adversaries.
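To make that matching step concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `IOC` class, the `find_ioc_hits` function, the field names, and the sample addresses are hypothetical, and real sensors and feeds use their own formats. It only shows the basic idea of checking internal events against externally provided values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IOC:
    """One externally provided indication of compromise (hypothetical shape)."""
    kind: str   # e.g. "ip", "sha256", "email_sender"
    value: str


def find_ioc_hits(iocs, events):
    """Return (event, ioc) pairs where an event field equals an IOC value.

    Comparison is case-insensitive and exact; no wildcards or CIDR ranges.
    """
    # Index IOCs by (kind, lowercased value) for constant-time lookups.
    index = {(ioc.kind, ioc.value.lower()): ioc for ioc in iocs}
    hits = []
    for event in events:
        for field, value in event.items():
            ioc = index.get((field, str(value).lower()))
            if ioc is not None:
                hits.append((event, ioc))
    return hits


# Made-up provider feed and made-up sensor events (documentation-only values).
iocs = [
    IOC("ip", "203.0.113.7"),
    IOC("email_sender", "billing@example.test"),
]
events = [
    {"ip": "198.51.100.4", "email_sender": "hr@example.org"},
    {"ip": "203.0.113.7", "email_sender": "Billing@Example.test"},
]

hits = find_ioc_hits(iocs, events)
for event, ioc in hits:
    print(f"match on {ioc.kind}: {ioc.value}")
```

Note that a hit here only tells you something that already happened matches something seen elsewhere, which is exactly the limitation discussed next.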

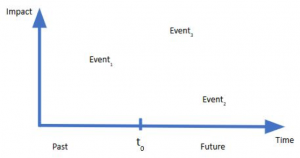

IOCs are essentially reactive; that is, they are backward-looking. Ideally, they were learned or observed as part of an attack that occurred somewhere else.

To be successful at cyber risk intelligence over the long term, you need to expand beyond indications of compromise (IOCs) to also consider (or maybe even prioritize):

- Indications of targeting (IOT)

- Indications of adversary interest (IOI)

- Indications of adversary opportunity (IOO)

These types of indications can be extracted from the intelligence feed types noted previously:

- Technical data

- TTPs

- Assessment and Estimation

- Vulnerability
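One way to keep track of this in practice is to tag every extracted indication with both its type and the feed type it came from. The sketch below is only an illustration under my own assumptions: the class names, the `count_by_kind` helper, and the sample records are hypothetical, and the pairing of a particular feed type with a particular indication type in the samples is invented, not a rule.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class IndicationKind(Enum):
    COMPROMISE = "IOC"
    TARGETING = "IOT"
    INTEREST = "IOI"
    OPPORTUNITY = "IOO"


class FeedType(Enum):
    TECHNICAL_DATA = "Technical data"
    TTPS = "TTPs"
    ASSESSMENT = "Assessment and Estimation"
    VULNERABILITY = "Vulnerability"


@dataclass(frozen=True)
class Indication:
    kind: IndicationKind
    feed: FeedType
    summary: str


def count_by_kind(indications):
    """Count how many indications of each kind have been extracted."""
    return Counter(i.kind for i in indications)


# Invented sample records; the kind/feed pairings are assumptions.
extracted = [
    Indication(IndicationKind.COMPROMISE, FeedType.TECHNICAL_DATA,
               "File hash reported from an attack elsewhere"),
    Indication(IndicationKind.OPPORTUNITY, FeedType.VULNERABILITY,
               "Flaw disclosed in a product we run"),
    Indication(IndicationKind.COMPROMISE, FeedType.TECHNICAL_DATA,
               "IP address reported from an attack elsewhere"),
]

counts = count_by_kind(extracted)
for kind in IndicationKind:
    print(f"{kind.value}: {counts[kind]}")
```

A tally like this makes it easy to see whether your intake is lopsided toward IOCs and light on the other three types.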

The next post will discuss the paramount importance of indication extraction.