Now, based on my many years of working in the commercial cyber threat intelligence space, I bet you didn’t really go through the effort of identifying the question I asked you to identify in my last post.

So, I will repeat the question, then read your mind.

The question: What question, if you knew the answer to it, would most significantly improve your security operations?

Think hard… I will wait…

Now for the mind reading: It’s probably something like:

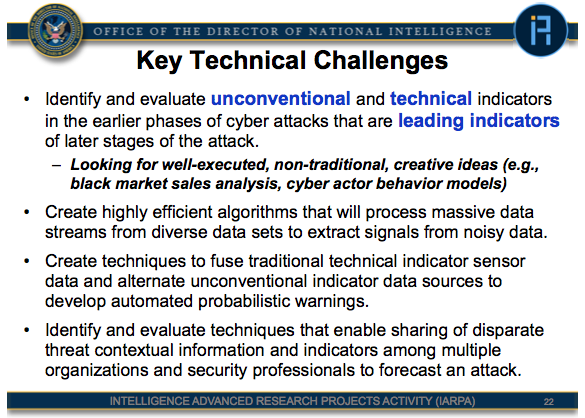

When will my organization be the victim of a significant cyber incident?

So, you thought it. You know you did. But there was a bit of cognitive dissonance because, when it crossed your mind, you also thought, “I can’t ask that. No one can know the future.”

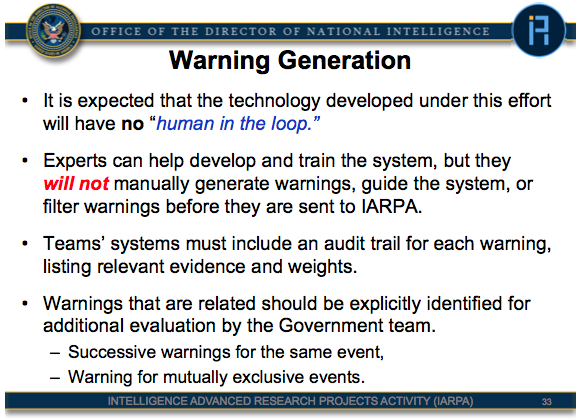

But that is where the deep magic of intelligence really begins.